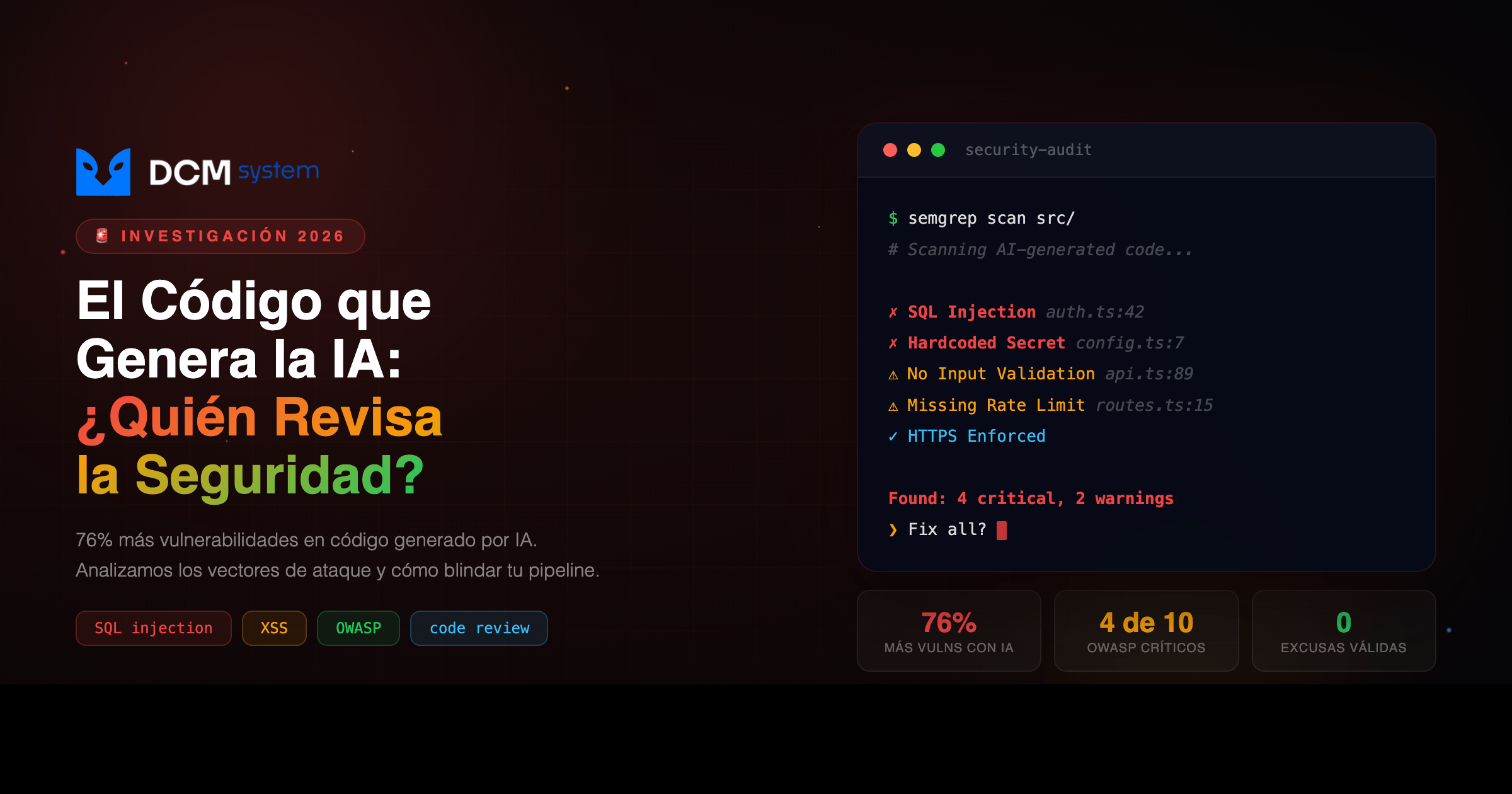

AI, Security, Code Review, Development, Vulnerabilities

AI-Generated Code: Who's Reviewing the Security?

April 8, 2026

10 min read

DCM System

AI Generates. Who Reviews?

Your team ships AI-generated code in minutes. Nobody audits it. Three weeks later: the breach.

[REAL DATA]: 56% of developers admit that AI tools generate code with security flaws — and 80% do not apply consistent security policies to that output. (Snyk, AI Code Security Report)

In this article you will:

- Understand why AI-generated code carries more vulnerabilities than human-written code

- See the 5 most common attack patterns in LLM output (with real code examples)

- Learn the 5-layer framework DCM applies to secure every line

- Know whether your company needs to audit what it has already deployed

01 THE REAL PROBLEM

Your team has just deployed a new microservice. They wrote it in two hours using Copilot, ChatGPT, and a few well-crafted prompts. Tests pass. CI is green. The PM is happy. Production.

Three weeks later, a 16-year-old script kiddie injects SQL through an endpoint nobody reviewed. Your customer database — names, emails, passwords hashed with MD5 because that is what the LLM produced — is in a Telegram paste. Your team, the same team that felt secure because “the AI generated it correctly,” is putting out fires at 3 a.m.

This is not science fiction. Stanford researchers documented it (Perry et al., ACM CCS 2023): developers using AI assistants wrote significantly less secure code, and were more likely to believe that it was secure.

THINK OF IT THIS WAY

It is like driving drunk while convinced you are driving better than ever. Confidence rises while actual performance falls.

| Before (without AI) | The hidden cost | After (AI without review) |
| --- | --- | --- |
| Slower code, but audited | Speed × carelessness | Fast code with active gaps |

02 WHY IT HAPPENS

LLMs learn from public repositories. GitHub holds millions of lines of vulnerable code: tutorials, prototypes, demos that never made it to production. The model does not distinguish between secure and insecure code — it optimizes for code that compiles and runs, not for code that withstands an attack.

The result: the model generates SQL with direct interpolation, hardcoded secrets, MD5-based cryptography, hallucinated dependencies. And it does so confidently, with no warnings.

Gartner projects that by 2026, more than 80% of new code will include AI-generated components. GitClear has already reported a 39% increase in code churn correlated with AI adoption — more code, faster, more attack surface left unreviewed. And Purdue University found that 52% of ChatGPT’s answers to programming questions contain errors. Flipping a coin gives you better odds.

03 THE SOLUTION

The problem is not the AI. It is the absence of process between “the model generated it” and “it is in production.”

These are the five vulnerability patterns that surface again and again in LLM output:

1. SQL Injection (CWE-89)

    // VULNERABLE: user input is interpolated directly into the SQL text
    const unsafeQuery = `SELECT * FROM users WHERE username = '${username}'`;
    // Attacker sends: ' OR '1'='1' --  → the WHERE clause is always true, exposing the entire table

    // SECURE: parameterized query; the driver sends the value separately from the SQL
    const safeQuery = 'SELECT id, username FROM users WHERE username = $1';
    await db.query(safeQuery, [username]);
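To see why interpolation fails, it helps to print what the database would actually receive. The sketch below needs no database. The `username` value is the attacker payload from the example above, and the `{ text, values }` shape is an assumption modeled on how drivers such as node-postgres accept parameterized queries; check your own driver's API.

```javascript
// Attacker-controlled input, taken from the example above.
const username = "' OR '1'='1' --";

// Interpolation: the payload becomes part of the SQL text itself.
const interpolatedSql = `SELECT * FROM users WHERE username = '${username}'`;
console.log(interpolatedSql);
// SELECT * FROM users WHERE username = '' OR '1'='1' --'

// Parameterization: the SQL text is fixed; the payload travels separately as data.
const parameterized = {
  text: 'SELECT id, username FROM users WHERE username = $1',
  values: [username],
};
console.log(parameterized.text); // no user input appears in the SQL text
```

In the first case the quote characters in the payload close the string literal and rewrite the WHERE clause. In the second, the same characters are only ever treated as a value to compare against.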

2. XSS (CWE-79): user input written into the HTML response without escaping.

    // VULNERABLE: the name parameter is written into the page as raw HTML
    res.send(`<h1>Welcome, ${req.query.name}</h1>`);

    // SECURE: escape the value and add a Content-Security-Policy as a second line of defense
    import escapeHtml from 'escape-html';

    res.setHeader('Content-Security-Policy', "default-src 'self'");
    res.send(`<h1>Welcome, ${escapeHtml(req.query.name || '')}</h1>`);
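The effect of escaping is easy to check in isolation. The sketch below uses a small hand-rolled `escapeHtml` as a stand-in for the escape-html package, so that it runs without dependencies. The payload is a hypothetical value for the `name` query parameter. In real code, prefer the maintained library over this illustration.

```javascript
// Hand-rolled stand-in for the escape-html package, for illustration only.
function escapeHtml(text) {
  const entities = { '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;' };
  return String(text).replace(/[&<>"']/g, (ch) => entities[ch]);
}

// Hypothetical malicious value for the `name` query parameter.
const payload = '<script>alert(1)</script>';

const unsafeHtml = `<h1>Welcome, ${payload}</h1>`;           // a browser would run the script
const safeHtml = `<h1>Welcome, ${escapeHtml(payload)}</h1>`; // a browser shows it as text

console.log(safeHtml);
// <h1>Welcome, &lt;script&gt;alert(1)&lt;/script&gt;</h1>
```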

3. Hardcoded secrets (CWE-798): the LLM places credentials in plaintext in the source because that is how it saw them in millions of training examples. The fix: environment variables, always; .env never committed to the repo.
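A minimal sketch of the environment-variable approach, assuming a Node.js service. The helper name `requireEnv` and the variable name `DATABASE_URL` are hypothetical. The point is to fail at startup when a secret is missing rather than fall back to a default baked into the code.

```javascript
// Hypothetical helper: read a required secret from the environment and
// fail fast instead of falling back to a hardcoded default.
function requireEnv(name) {
  const value = process.env[name];
  if (value === undefined || value === '') {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Usage (DATABASE_URL is an assumed variable name):
// const databaseUrl = requireEnv('DATABASE_URL');
```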

4. Weak cryptography (CWE-327): MD5, SHA-1, AES-ECB, static IVs. The LLM produces them because they are common in its training data. Use bcrypt or Argon2 for passwords, and AES-256-GCM with a fresh random IV per message for encryption.
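As a rough sketch of the safer alternatives, the block below uses only Node's built-in `crypto` module. Because bcrypt and Argon2 require third-party packages, scrypt stands in for them here as the password hashing function; that substitution, the storage format, and the function names are assumptions made for the example, not a recommendation over the algorithms named above.

```javascript
const crypto = require('node:crypto');

// Password hashing with scrypt (built into Node.js; a stand-in here for bcrypt/Argon2).
function hashPassword(password) {
  const salt = crypto.randomBytes(16); // unique salt per password
  const hash = crypto.scryptSync(password, salt, 64);
  return `${salt.toString('hex')}:${hash.toString('hex')}`;
}

function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(':');
  const expected = Buffer.from(hashHex, 'hex');
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, 'hex'), expected.length);
  return crypto.timingSafeEqual(actual, expected); // constant-time comparison
}

// AES-256-GCM with a fresh random 12-byte IV for every message.
function encrypt(plaintext, key) {
  const iv = crypto.randomBytes(12);
  const cipher = crypto.createCipheriv('aes-256-gcm', key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, 'utf8'), cipher.final()]);
  return { iv, ciphertext, tag: cipher.getAuthTag() };
}

function decrypt({ iv, ciphertext, tag }, key) {
  const decipher = crypto.createDecipheriv('aes-256-gcm', key, iv);
  decipher.setAuthTag(tag); // final() throws if the data was tampered with
  return Buffer.concat([decipher.update(ciphertext), decipher.final()]).toString('utf8');
}
```

The key passed to `encrypt` must be 32 bytes and should itself come from a secret store, not from source code. The IV and the authentication tag are not secret and are stored alongside the ciphertext.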

5. Slopsquatting: LLMs hallucinate package names in a significant share of responses (over 30% of queries in some tests). An attacker registers the fictitious name on npm or PyPI with a postinstall script that exfiltrates your environment variables. One npm install and every API key is gone, with no warnings.
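One way to act on this before running npm install is to inspect the package's registry metadata first. The sketch below is a hypothetical pre-install check. It assumes `meta` is the parsed metadata document for the package (or `null` if the registry has no such package), and that the document exposes `time.created`, `dist-tags.latest`, and per-version `scripts`, as npm registry metadata does to our understanding. The 30-day threshold is an arbitrary assumption. Verify the field names against a real registry response before relying on it.

```javascript
// Hypothetical pre-install check. `meta` is the parsed registry metadata for a
// package, or null if the registry has no package by that name.
function reviewPackage(meta, now = new Date()) {
  if (meta === null) {
    return ['package does not exist: the name may be hallucinated'];
  }
  const warnings = [];

  // Very young packages deserve a closer look (30 days is an assumed threshold).
  const created = new Date(meta.time?.created);
  const ageDays = (now - created) / (1000 * 60 * 60 * 24);
  if (Number.isNaN(ageDays) || ageDays < 30) {
    warnings.push('published less than 30 days ago (or creation date unknown)');
  }

  // Install-time scripts run automatically during npm install.
  const latest = meta['dist-tags']?.latest;
  const scripts = meta.versions?.[latest]?.scripts ?? {};
  for (const hook of ['preinstall', 'install', 'postinstall']) {
    if (scripts[hook]) warnings.push(`runs a ${hook} script: ${scripts[hook]}`);
  }
  return warnings;
}
```

An empty result does not prove a package is safe. It only means none of these specific red flags were found.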

There is one cross-cutting factor no benchmark captures: the LLM does not know your architecture. It does not know your database holds 50 million records, that your API requires idempotency keys, or that local regulation forbids data leaving the country. It generates generic code. And generic code inside a specific architecture is broken code waiting for a trigger.

04 HOW TO IMPLEMENT IT

The 5-layer framework DCM applies to every line of code an LLM touches:

- Defensive prompts. Embed security requirements in the prompt before generating. If you need a login endpoint, specify: parameterized queries, rate limiting (5 attempts per IP per 15 min), bcrypt, JWT with a 1-hour expiration, HSTS and CSP headers.

- Automated static analysis. Before any human sees the code, run Semgrep (--config=p/owasp-top-ten), SonarQube, or Bandit (Python). If the scan flags anything, it does not pass.

- Dependency auditing. Run npm info <package> before installing anything an LLM suggests. Use Socket.dev to detect supply chain attacks. Add npm audit and Snyk to the pipeline.

- Human review with a checklist. Input validation, parameterized queries, zero hardcoded secrets, current cryptographic algorithms, expiring tokens, rate limiting, error handling without stack traces, logs without sensitive data, configured CORS and CSP.

- CI/CD gates. SAST + dependency check + secrets scanning with Gitleaks + container scanning with Trivy. If any of them fails, the deploy is blocked. No exceptions, no bypass.
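The "if any of them fails, the deploy is blocked" rule from the last layer can be sketched as a small Node.js script. This is an illustration under assumptions, not DCM's actual pipeline: the image name `my-service:latest`, the `src/` path, and the severity threshold are placeholders, and the command-line flags should be checked against the tool versions you have installed.

```javascript
const { spawnSync } = require('node:child_process');

// Gate commands are illustrative; adjust paths, image names, and thresholds.
const gates = [
  { name: 'SAST', cmd: 'semgrep', args: ['--config=p/owasp-top-ten', '--error', 'src/'] },
  { name: 'dependencies', cmd: 'npm', args: ['audit', '--audit-level=high'] },
  { name: 'secrets', cmd: 'gitleaks', args: ['detect'] },
  { name: 'container', cmd: 'trivy', args: ['image', '--exit-code', '1', 'my-service:latest'] },
];

// Default runner: execute the tool and report whether it exited with status 0.
function runTool(gate) {
  const result = spawnSync(gate.cmd, gate.args, { stdio: 'inherit' });
  return result.status === 0;
}

// Run every gate (no short-circuit, so the report is complete) and
// return the names of the ones that failed.
function failedGates(gateList, run = runTool) {
  return gateList.filter((gate) => !run(gate)).map((gate) => gate.name);
}

// In a CI step (hypothetical wiring):
// const failed = failedGates(gates);
// if (failed.length > 0) {
//   console.error(`Deploy blocked by: ${failed.join(', ')}`);
//   process.exit(1);
// }
```

Passing the runner as a parameter keeps the decision logic testable without the tools installed. A missing tool counts as a failure, which matches the "no bypass" rule.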

05 IS THIS FOR YOU?

Yes, if your company:

- ✅ Already uses AI (Copilot, ChatGPT, Cursor) to generate code that reaches production

- ✅ Has no automated security pipeline reviewing that output

- ✅ Grew quickly with AI and now carries technical debt nobody has audited

No, if:

- ❌ Your team already runs SAST, human review with a checklist, and dependency auditing on every PR

- ❌ Your code does not touch user data or critical infrastructure (internal prototype, demos)

Frequently asked questions

Is it enough for the developer who generated the code to review it themselves?

No. The developer who generated the code tends to share the LLM's blind spots: they cannot catch what they do not know to look for. The review should be done by someone other than the author, with both security context and knowledge of the system.

Will LLMs improve and stop producing vulnerable code?

Partially. Newer models make fewer obvious mistakes. But the underlying problem — lack of context about your architecture, compliance requirements, and threat model — is not solved by more parameters. It is solved by process.

What if we already have AI-generated code in production that was never audited?

Prioritize an audit of authentication endpoints, user data handling, and installed dependencies. Those are the highest-risk vectors. Then implement the preventive pipeline.

Immediate action: Run semgrep --config=p/owasp-top-ten src/ in your repository today. If you get hits, you already know where to start. It takes less than ten minutes to install.

Need help? → Talk to DCM — we have spent more than 12 years building software that does not get hacked.