AI Breaks Your Code

AI can already scan your software in minutes, and it doesn't care whether you have a security team.

[FACT]: In February 2025, Thomas Ptacek (co-founder of Matasano Security, with 25 years of breaking systems for a living) published “Vulnerability Research Is Cooked”. His argument: LLMs already find real vulnerabilities at a speed and scale that no human team can match. When someone like that says it, you pay attention.

In this article you will:

- Understand why the “nobody is going to find that bug” mental model is dead

- See real evidence: what Claude found, what OpenAI automated, and what swamped the Linux kernel maintainers

- Learn the new rules of the game before someone uses them against you

- Set up automated security scanning in your CI/CD this week

01 THE REAL PROBLEM

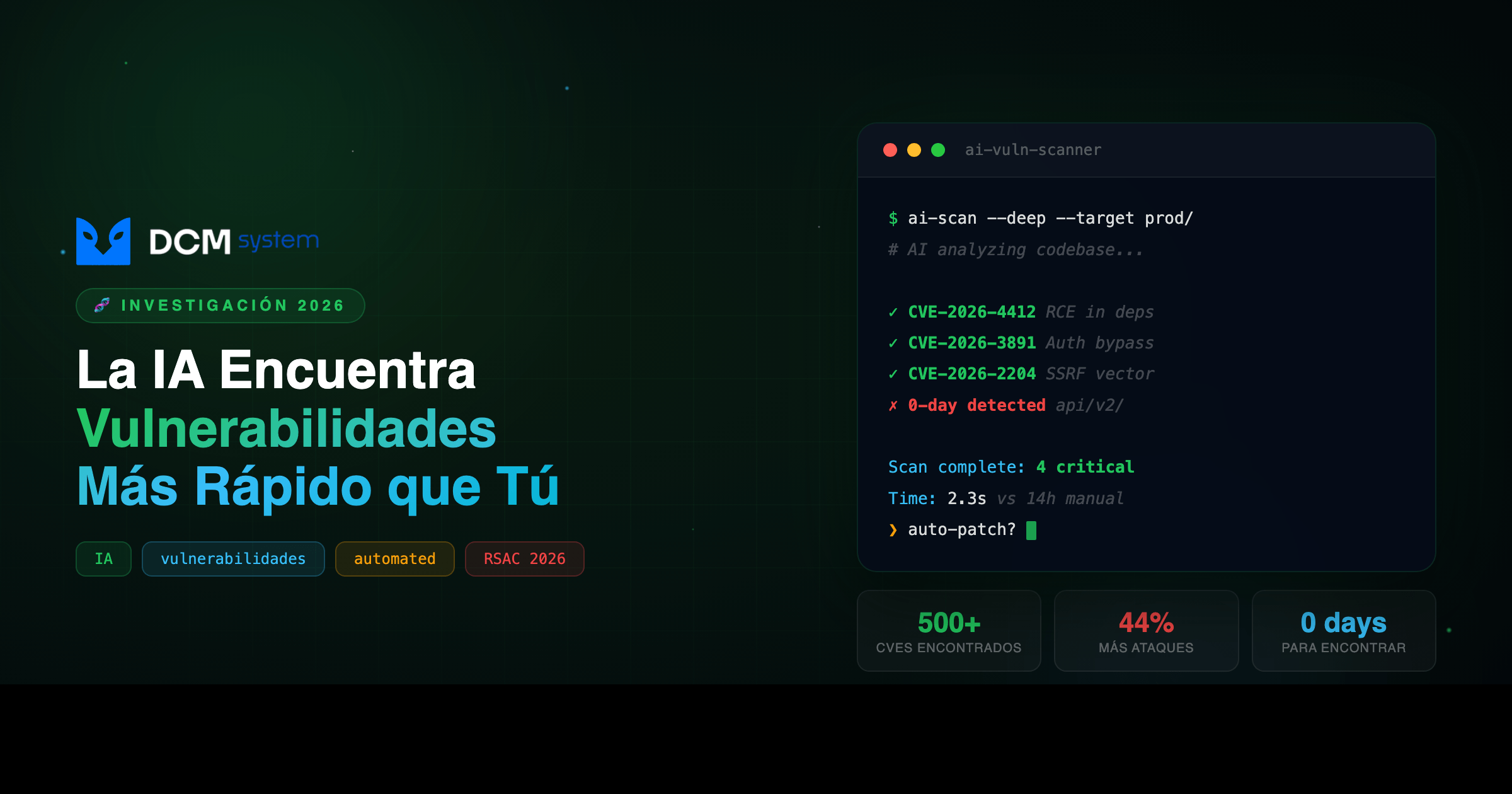

An AI model can analyze an entire repository in minutes. It doesn't rest, doesn't get distracted, and doesn't bill by the hour. Anthropic reported that Claude discovered more than 500 vulnerabilities in widely used open-source projects: buffer overflows, race conditions, SQL injections, authentication flaws. These are not trivial bugs; they sit in software that millions of people use every day.

OpenAI built “Aardvark”, an automated security researcher: you hand the model a codebase and let it hunt for flaws systematically. A senior researcher reviews roughly 500 to 1,000 lines per hour; Aardvark analyzes entire repositories in minutes.

IBM X-Force reported a 44% increase in attacks that exploit public-facing applications. RSAC 2026, one of the world's largest cybersecurity conferences, is expected to be dominated by a single topic: AI applied to offensive security. This isn't the future. It's happening now.

THINK OF IT THIS WAY

Finding the hidden bug in your code used to be like looking for a needle in a haystack: it took an expert weeks, plenty of coffee, and patience. Now a machine sweeps a magnet over the whole haystack in five minutes and pulls out the needles.

| What's at stake | Before | After |
|---|---|---|
| The same bug | Weeks of manual auditing | Minutes of automated scanning |
| Your exposed endpoint | A few skilled attackers | Tools within anyone's reach |
| “Nobody is going to find that” | A valid assumption | An assumption that will cost you dearly |

02 WHY IT'S HAPPENING

Two forces are acting at the same time, and they reinforce each other.

First: AI has democratized offensive tooling. Serious pentesting used to require years of expertise. Today an LLM-driven fuzzer generates context-aware payloads, analyzes the responses, and detects blind SQL injection and other variants that a traditional scanner would miss, all in minutes and without any expertise on the part of whoever runs it.

Second: the same AI that finds vulnerabilities also creates them. LLM-generated code shows predictable patterns: SQL injection, hardcoded secrets, weak cryptography, and invented (hallucinated) dependencies. Your team ships code faster with AI, but that code carries more attack surface. And attackers already use AI to scan it.
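To make “weak cryptography” concrete: one way it can show up is passwords hashed with a fast, unsalted digest such as MD5. The following is a minimal sketch, not a vetted implementation, of a safer alternative using only Node's built-in `crypto` module: a random salt per password, the memory-hard `scrypt` key derivation function, and a constant-time comparison. The helper names `hashPassword` and `verifyPassword` and the `salt:hash` storage format are hypothetical choices for illustration.

```javascript
const crypto = require("node:crypto");

// Weak pattern sometimes produced by code generators (for contrast only):
//   crypto.createHash("md5").update(password).digest("hex")
// MD5 is fast and, used like this, unsalted, so leaked hashes are cheap to brute-force.

const KEY_LENGTH = 64; // length of the derived key, in bytes

// Hypothetical helper: returns "saltHex:hashHex" to store in the database.
function hashPassword(password) {
  const salt = crypto.randomBytes(16); // unique random salt per password
  const hash = crypto.scryptSync(password, salt, KEY_LENGTH);
  return `${salt.toString("hex")}:${hash.toString("hex")}`;
}

// Hypothetical helper: re-derives the key with the stored salt and compares
// in constant time, so response timing doesn't leak how many bytes matched.
function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(":");
  const expected = Buffer.from(hashHex, "hex");
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, "hex"), KEY_LENGTH);
  return expected.length === actual.length && crypto.timingSafeEqual(expected, actual);
}

// Usage:
//   const stored = hashPassword("correct horse");
//   verifyPassword("correct horse", stored); // true
//   verifyPassword("wrong guess", stored);   // false
```

Because each call generates a new salt, hashing the same password twice yields different stored values, which is what defeats precomputed lookup tables.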

The result: the window between “bug introduced” and “bug exploited” has shrunk from months to days or even hours. It's an arms race. If you're not on the right side, you lose.

03 THE SOLUTION

The answer is to flip the equation: use the same AI that attacks you to defend yourself before anyone else gets there.

But there's a catch nobody anticipated. If AI finds vulnerabilities faster, that means more reports. Many more. And the ecosystem wasn't ready for them.

Daniel Stenberg, creator of cURL (arguably the most widely used command-line tool in history), experienced this firsthand. The number of vulnerability reports multiplied. The problem: the vast majority are garbage. They are AI-generated reports that sound convincing, are professionally formatted, cite real CVEs… and are completely wrong. The model hallucinates a vulnerability with the confidence of an expert. Maintainers, many of whom work unpaid in their spare time, spend hours verifying false positives.

Linux kernel maintainers face the same problem, multiplied a hundredfold. Some reports are real and valuable. But separating them from the noise takes the same expertise that used to be needed to find the bugs in the first place. More useful signal, yes, but also far more noise.

The right answer isn't just running more scanners. It's integrating them into your process, with human oversight that filters out the noise and acts on what's real.

The approach that works: automated scanning on every commit, review by engineers who understand the business context, API fuzzing before every release, dependency auditing, and a threat model updated quarterly that includes AI-driven attack vectors.

04 HOW TO IMPLEMENT IT

- Add security scanning to your CI/CD this week, not next sprint:

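```yaml
# .github/workflows/security.yml
name: Security Gate

on: [push, pull_request]

jobs:
  # SAST: static analysis (Semgrep runs in its official container image)
  semgrep:
    runs-on: ubuntu-latest
    container:
      image: semgrep/semgrep
    steps:
      - uses: actions/checkout@v4
      - name: Semgrep
        # --error makes the step fail when there are findings
        run: >-
          semgrep scan --error
          --config p/owasp-top-ten
          --config p/javascript
          --config p/typescript

  security-scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0 # full history, so Gitleaks can scan every commit

      # Vulnerable dependencies (requires a package-lock.json in the repo)
      - name: Audit dependencies
        run: npm audit --audit-level=high

      # Secrets that must not be in the repo
      - name: Gitleaks
        uses: gitleaks/gitleaks-action@v2
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          # Organization accounts also need GITLEAKS_LICENSE (see the action's README)

# If ANY step fails, its job fails. The PR is only blocked from merging
# when both jobs are marked as required status checks in branch protection.
```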
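The `--audit-level=high` flag fails the step only when there are high or critical findings. If you ever need a custom policy (say, a different threshold per branch), here is a minimal sketch of the same gating logic applied to the output of `npm audit --json`. It assumes the npm 7+ report shape, where `metadata.vulnerabilities` holds a count per severity; verify that against your npm version. The function name `shouldFailBuild` is hypothetical.

```javascript
// Severity levels in increasing order, as reported by npm audit.
const LEVELS = ["info", "low", "moderate", "high", "critical"];

// Hypothetical gate: true if any vulnerability is at or above `threshold`.
// `report` is assumed to be the parsed output of `npm audit --json` (npm 7+).
function shouldFailBuild(report, threshold = "high") {
  const minIndex = LEVELS.indexOf(threshold);
  if (minIndex === -1) throw new Error(`Unknown severity: ${threshold}`);
  const counts = (report.metadata && report.metadata.vulnerabilities) || {};
  return LEVELS.slice(minIndex).some((level) => (counts[level] || 0) > 0);
}

// Usage in CI (sketch). npm audit exits non-zero when it finds issues,
// hence the `|| true` so the report is still written:
//   npm audit --json > audit.json || true
//   const report = JSON.parse(require("node:fs").readFileSync("audit.json", "utf8"));
//   if (shouldFailBuild(report, "high")) process.exit(1);
```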

- Audit your public attack surface. Inventory every endpoint, subdomain, and service exposed to the internet. If you don't know what you have out there, you can't defend it:

```bash
# Only run these against domains and hosts you own or are authorized to test.

# Subdomain discovery
subfinder -d tudominio.com -o subdominios.txt

# Port and service scan
nmap -sV -sC -oN scan_results.txt tudominio.com

# Endpoint fuzzing (adjust the wordlist path to your system)
ffuf -w /usr/share/wordlists/common.txt \
  -u https://tudominio.com/FUZZ \
  -mc 200,301,302,403
```
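An inventory only helps if you notice when it changes. A minimal sketch, assuming you keep the previous `subfinder` output (one host per line, which is what `-o` writes) and compare it with a fresh run: it reports hosts that appeared, which are new and possibly unreviewed exposure, and hosts that disappeared. The helpers `parseHosts` and `diffInventory` and the file names in the usage comment are hypothetical.

```javascript
// Parse a one-host-per-line inventory into a normalized Set.
function parseHosts(text) {
  return new Set(
    text
      .split(/\r?\n/)
      .map((line) => line.trim().toLowerCase())
      .filter((line) => line.length > 0)
  );
}

// Hypothetical helper: compare the previous and current inventories.
function diffInventory(previousText, currentText) {
  const before = parseHosts(previousText);
  const after = parseHosts(currentText);
  const added = [...after].filter((h) => !before.has(h)).sort();
  const removed = [...before].filter((h) => !after.has(h)).sort();
  return { added, removed };
}

// Usage sketch (hypothetical file names):
//   const fs = require("node:fs");
//   const { added } = diffInventory(
//     fs.readFileSync("subdomains-last-week.txt", "utf8"),
//     fs.readFileSync("subdomains-today.txt", "utf8"));
//   added.forEach((h) => console.log("New exposed host, review it:", h));
```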

- Review AI-generated code closely. Every piece that comes out of an LLM goes through human review with a security mindset. One of the most common patterns AI introduces is string concatenation in SQL. This endpoint looks functional and passes basic tests, but it's broken:

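```javascript
// AI-generated code: vulnerable to SQL injection
// (assumes an Express `app` and a mysql-style `db` client)
app.get('/api/search', (req, res) => {
  const { query, category } = req.query;
  // User input is interpolated straight into the SQL string
  const sql = `SELECT * FROM products
    WHERE name LIKE '%${query}%'
    AND category = '${category}'`;
  // Errors are silently ignored as well
  db.query(sql, (err, results) => res.json(results));
});
```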

An AI-driven fuzzer can find this in minutes. You don't need to be an elite hacker to run one.
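The fix is to never build SQL out of user input: send the statement with placeholders and pass the values separately, so the driver treats them strictly as data. A minimal sketch, assuming a mysql/mysql2-style client whose `db.query(sql, values, callback)` accepts `?` placeholders (other drivers, such as `pg`, use `$1`, `$2` instead). `buildSearchQuery` is a hypothetical helper; it also escapes the LIKE wildcards `%` and `_` so that a search for `50%` matches literally, relying on backslash being MySQL's default LIKE escape character.

```javascript
// Hypothetical helper: builds a parameterized query instead of concatenating strings.
function buildSearchQuery(query, category) {
  // Prefix every backslash, % and _ with a backslash (single pass),
  // so user input can't act as a LIKE wildcard.
  const term = String(query ?? "").replace(/[\\%_]/g, (ch) => "\\" + ch);
  return {
    sql: "SELECT * FROM products WHERE name LIKE ? AND category = ?",
    values: [`%${term}%`, String(category ?? "")],
  };
}

// Usage in the Express route (assumes the same `app` and `db` as above):
// app.get('/api/search', (req, res) => {
//   const { sql, values } = buildSearchQuery(req.query.query, req.query.category);
//   db.query(sql, values, (err, results) => {
//     if (err) return res.status(500).json({ error: 'query failed' });
//     res.json(results);
//   });
// });
```

The SQL text is now a constant, so input like `x' OR '1'='1` ends up as a literal search term instead of changing the query.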

- Update your threat model. If the last one is more than 6 months old, it's out of date. Include: AI as an attack vector (automated fuzzing), AI as a source of vulnerabilities (unreviewed code), AI-powered supply chain attacks, and hyper-personalized phishing.

- Train the whole team, not just developers. Most breaches start with a human clicking where they shouldn't.

- Measure MTTR (Mean Time To Remediate). The question is no longer whether someone will find a bug. It's how fast you patch it.
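MTTR is the average time between detecting a vulnerability and deploying its fix. A minimal sketch of the calculation, assuming each finding is recorded with hypothetical `detectedAt` and `fixedAt` fields holding ISO 8601 timestamps. Findings that are still open have no fix time yet, so they are excluded from the average and counted separately; a long tail of open findings means the MTTR alone looks better than reality.

```javascript
const HOUR_MS = 60 * 60 * 1000;

// Hypothetical finding shape: { id, detectedAt, fixedAt? } (ISO 8601 strings).
function computeMttrHours(findings) {
  const durations = findings
    .filter((f) => f.fixedAt)
    .map((f) => (Date.parse(f.fixedAt) - Date.parse(f.detectedAt)) / HOUR_MS);
  const open = findings.length - durations.length;
  if (durations.length === 0) return { mttrHours: null, fixed: 0, open };
  const total = durations.reduce((sum, d) => sum + d, 0);
  return { mttrHours: total / durations.length, fixed: durations.length, open };
}

// Usage: one fix took 10 h, another 26 h, a third finding is still open.
// computeMttrHours([
//   { id: "A", detectedAt: "2025-01-01T00:00:00Z", fixedAt: "2025-01-01T10:00:00Z" },
//   { id: "B", detectedAt: "2025-01-02T00:00:00Z", fixedAt: "2025-01-03T02:00:00Z" },
//   { id: "C", detectedAt: "2025-01-04T00:00:00Z" },
// ]);
// → { mttrHours: 18, fixed: 2, open: 1 }
```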

05 IS THIS FOR YOU?

Yes, if your company:

- ✅ Has software in production with public endpoints (APIs, web apps, services)

- ✅ Uses AI to generate code or automate development

- ✅ Still assumes “nobody is going to find that bug” because the path to it isn't obvious

No, if:

- ❌ You have no systems exposed to the internet: with no public surface, the risk is lower

- ❌ You already have automated scanning in CI/CD, periodic fuzzing, and quarterly threat modeling: you're on track, and this is confirmation, not news

Frequently asked questions

Does automated scanning replace a professional security audit? No. Automated scanning finds issues that follow known patterns: OWASP Top 10, vulnerable dependencies, exposed secrets. A professional audit finds business-logic flaws, architectural vulnerabilities, and combinations of issues that only a human with context can see. The two complement each other; neither substitutes for the other.

Is it legal for AI to scan my own code for vulnerabilities? Yes, for your own code and for open-source code. The legal line is scanning third-party systems without authorization. Running tools like Semgrep, CodeQL, or Gitleaks in your CI/CD is entirely legitimate and recommended.

What do I do if the scanner reports lots of false positives? This is exactly the problem the Linux kernel maintainers faced with AI-generated reports. The key is to tune the scanner's rules to your specific context and to assign triage to someone with technical judgment, rather than to the same person who wrote the code.
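One common way to keep that noise manageable is a reviewed baseline: once a person has triaged a finding as a false positive or accepted risk, it is recorded by a stable fingerprint and suppressed in later runs, so only new findings reach the reviewer. A minimal sketch, assuming a hypothetical finding shape `{ ruleId, file, snippet, severity }`; the fingerprint deliberately leaves out the line number so findings don't resurface when unrelated edits shift them. Real tools have their own suppression mechanisms (for example, Semgrep's `nosemgrep` comments and Gitleaks' `.gitleaksignore` file), so check those first.

```javascript
// Stable fingerprint: rule + file + matched snippet (line number intentionally excluded).
function fingerprint(f) {
  return `${f.ruleId}|${f.file}|${(f.snippet || "").trim()}`;
}

// Lower number = more urgent; unknown severities sort last.
const SEVERITY_ORDER = { critical: 0, high: 1, medium: 2, low: 3, info: 4 };

// Hypothetical triage step: drop findings a human already reviewed and
// sort the rest so the most severe ones are looked at first.
function triage(findings, reviewedBaseline) {
  const reviewed = new Set(reviewedBaseline);
  return findings
    .filter((f) => !reviewed.has(fingerprint(f)))
    .sort((a, b) => (SEVERITY_ORDER[a.severity] ?? 5) - (SEVERITY_ORDER[b.severity] ?? 5));
}
```

The baseline itself (a list of fingerprints) would live in the repo, so adding an entry goes through code review like any other change.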

Immediate action: Add Gitleaks to your repo today. It takes less than 15 minutes and tells you right away whether your commit history contains exposed secrets, one of the highest-impact vulnerability types and one of the quickest for an attacker to find.
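To get a feel for what a secret scanner does, here is a drastically simplified sketch: check each line of text against a few regular expressions for well-known secret formats. The two patterns below (an AWS access key ID, `AKIA` followed by 16 uppercase letters or digits, and a PEM private key header) are illustrative assumptions, not Gitleaks' actual rule set, which is far larger and also applies entropy checks. `findSecrets` is a hypothetical helper; in practice, use Gitleaks itself.

```javascript
// Illustrative patterns only; a real scanner ships many more rules.
const RULES = [
  { id: "aws-access-key-id", regex: /\bAKIA[0-9A-Z]{16}\b/ },
  { id: "private-key", regex: /-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----/ },
];

// Hypothetical helper: returns { ruleId, line } for each match (1-based line numbers).
function findSecrets(text) {
  const hits = [];
  text.split(/\r?\n/).forEach((lineText, i) => {
    for (const rule of RULES) {
      if (rule.regex.test(lineText)) hits.push({ ruleId: rule.id, line: i + 1 });
    }
  });
  return hits;
}

// Usage, with AWS's documented example key ID:
//   findSecrets("const region = 'us-east-1';\nconst key = 'AKIAIOSFODNN7EXAMPLE';");
//   → [ { ruleId: "aws-access-key-id", line: 2 } ]
```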

Want us to review your security pipeline? → Talk to DCM