Vibe Coding, Brechas Reales

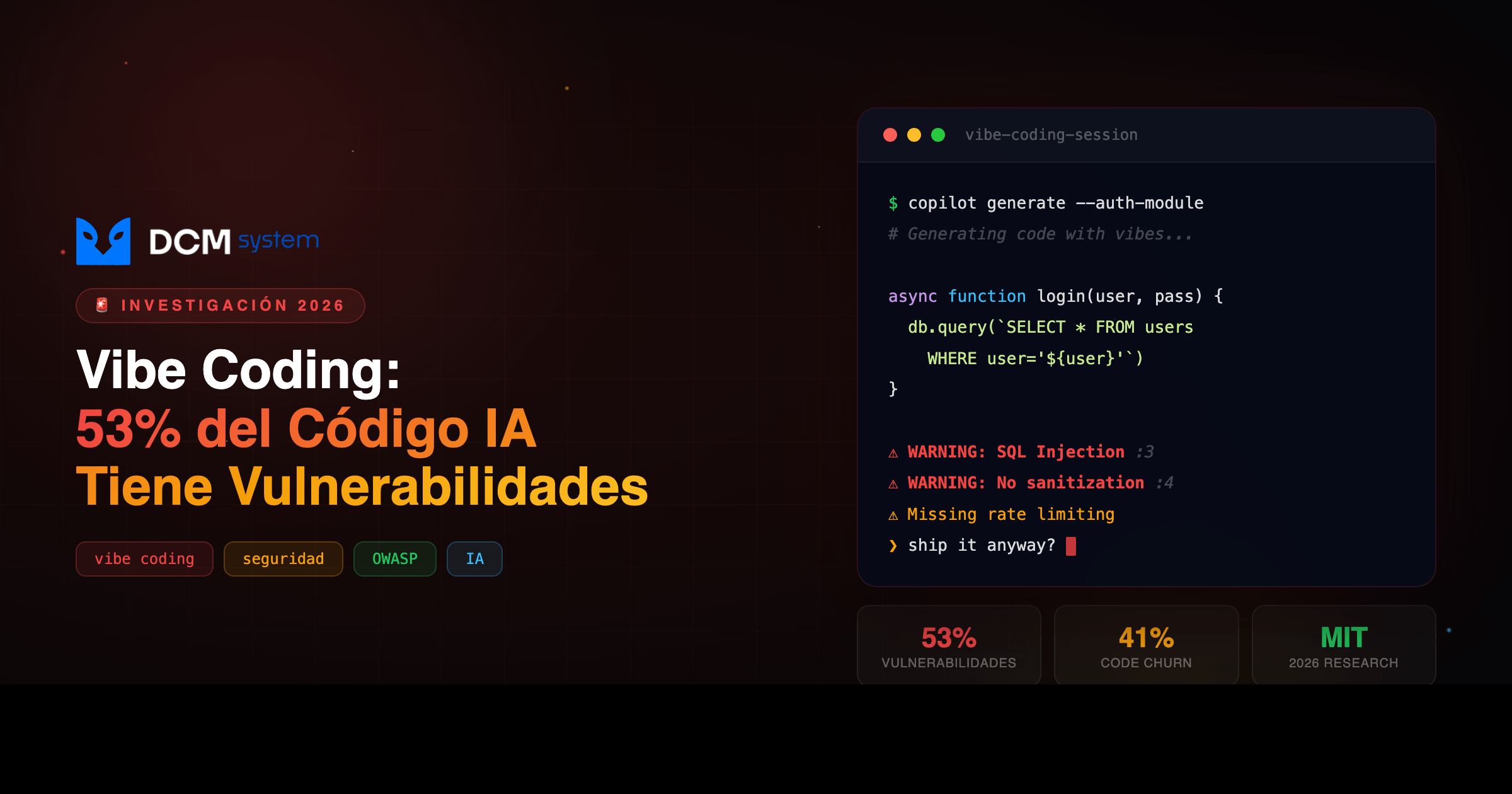

Programar por vibra es rápido y adictivo. El 53% de los equipos ya encontró vulnerabilidades en producción.

[DATO REAL]: Según Checkmarx y Palo Alto Networks, el 53% de los equipos que generan y despliegan código con IA ya encontraron vulnerabilidades de seguridad — después del deploy, con usuarios reales adentro.

En este artículo vas a:

- Ver los 4 patrones de vulnerabilidad más comunes que genera el vibe coding (con código real)

- Entender por qué el modelo nunca va a conocer tu arquitectura de seguridad

- Aplicar el framework de 5 capas de DCM para vibear rápido sin abrir brechas

- Saber qué puedes implementar hoy sin esperar a nadie

01 EL PROBLEMA REAL

Abres tu editor, le escribes al modelo “hazme un endpoint de login con JWT”, y en 30 segundos tienes algo que compila, responde y hasta se ve bonito. No tocaste una sola línea. Eso es vibe coding: programar por vibra, por intención, sin ensuciarte las manos con sintaxis.

La velocidad es real. La barrera de entrada desapareció. Startups lanzan MVPs en días. Desarrolladores senior saltean el boilerplate. Pero ese código que se siente mágico tiene un lado oscuro que casi nadie está viendo.

MIT Technology Review lo nombró una de las tecnologías revolucionarias de 2026. Fortune lo resumió: “In the Age of Vibe Coding, Trust Is the Real Bottleneck.” La velocidad ya no es el cuello de botella. La confianza en el output lo es.

PIÉNSALO ASÍ

Es como un piloto que deja de saber volar manual porque siempre usa autopiloto. Funciona — hasta que no funciona. Y cuando no funciona, estás a 10,000 metros de altura sin saber qué palanca tocar.

Antes El costo oculto Después Dev escribe y entiende cada línea Velocidad × falta de criterio Código rápido con brechas que nadie detectó

02 POR QUÉ PASA

Un modelo de lenguaje genera código prediciendo el siguiente token más probable basado en patrones estadísticos de su entrenamiento. No tiene un modelo mental de tu sistema.

El modelo optimiza para que funcione, no para que sea seguro. Cuando le dices “hazme un endpoint de login”, te da el endpoint más probable según millones de ejemplos — muchos de ellos tutoriales, proyectos de aprendizaje, prototipos, demos. Código que nunca fue diseñado para producción.

Lo que el modelo no tiene:

- Contexto de amenazas: no sabe qué vectores de ataque aplican a tu stack específico

- Conocimiento de compliance: no sabe si necesitas SOC 2, GDPR, o la normativa colombiana de habeas data

- Visión de arquitectura: no sabe cómo tu componente se integra con el resto del sistema

- Historial de incidentes: no conoce las vulnerabilidades que ya te han explotado antes

Investigadores de Stanford confirmaron el efecto en 2023: los desarrolladores que usaban asistentes de IA producían código significativamente menos seguro — y estaban más convencidos de que su código era seguro. Más confianza, más riesgo.

GitClear lo midió: proyectos con herramientas de IA tienen un 41% más de code churn — código que se escribe y se reescribe rápidamente. Más superficie de ataque, más líneas que nadie revisó con atención.

03 LA SOLUCIÓN

Estos son los 4 patrones de vulnerabilidad que el vibe coding produce de forma consistente:

1. Inyección SQL — el clásico que sigue vivo

# Lo que el modelo genera (VULNERABLE)

@app.route('/api/users')

def search_users():

name = request.args.get('name')

query = f"SELECT * FROM users WHERE name = '{name}'"

result = db.execute(query)

return jsonify([dict(row) for row in result])

# Atacante mete: ' OR '1'='1' -- → devuelve toda la tabla

# SEGURO — un parámetro, toda la diferencia

@app.route('/api/users')

def search_users():

name = request.args.get('name')

query = "SELECT * FROM users WHERE name = %s"

result = db.execute(query, (name,))

return jsonify([dict(row) for row in result])

2. Secrets en texto plano

# VULNERABLE — el modelo hardcodea credenciales porque así las vio en training

client = boto3.client('s3',

aws_access_key_id='AKIAIOSFODNN7EXAMPLE',

aws_secret_access_key='wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY')

GitHub detecta más de 1 millón de secretos expuestos por año en repositorios públicos. Con vibe coding, ese número solo crece.

3. Validación que no valida nada (Mass Assignment)

// "Hazme un formulario de registro con validación" — resultado:

app.post('/api/register', (req, res) => {

const { email, password, role } = req.body;

if (!email.includes('@')) return res.status(400).json({ error: 'Email inválido' });

// El modelo "validó" el email. Y aceptó cualquier rol que mande el usuario.

db.query('INSERT INTO users VALUES (?, ?, ?)', [email, password, role]); // role = "admin" 🤷

});

El modelo no entiende tu modelo de permisos. No sabe qué roles existen. Te dio exactamente lo que pediste — y nada más.

4. Deserialización insegura — la más sutil

# VULNERABLE — ejecución remota de código disfrazada de feature

@app.route('/api/session', methods=['POST'])

def restore_session():

data = request.json.get('session_data')

session = pickle.loads(base64.b64decode(data)) # ← RCE directo

return jsonify(session)

pickle.loads con datos del usuario es ejecución remota de código. Un atacante serializa un payload que ejecuta comandos arbitrarios en tu servidor.

04 CÓMO IMPLEMENTARLO

El framework de 5 capas de DCM para vibear rápido sin abrir brechas:

-

Prompts defensivos — no le pidas “hazme un endpoint”, dale contexto de seguridad explícito:

Genera un endpoint REST para buscar usuarios por nombre. Requisitos: consultas parametrizadas, validación de input, rate limiting, sin IDs internos en respuesta, errores sin detalles del sistema, logs de intentos fallidos para auditoría. -

Revisión automatizada pre-commit — SAST (Semgrep, CodeQL, SonarQube) + detección de secrets (TruffleHog, Gitleaks) como pre-commit hooks. Si hay un SQL concatenado o un secret hardcodeado, el código no pasa. No depende de que alguien lo note.

-

Code review con foco en seguridad — la automatización no puede ver la lógica de negocio, los flujos de autorización ni cómo se integra el componente. Cada PR pasa por un ingeniero revisando: autenticación/autorización, manejo de datos sensibles, puntos de entrada/salida, consistencia con la arquitectura global.

-

Testing de seguridad continuo en CI/CD — tests de inyección (verificar que cada input está parametrizado), tests de autorización (confirmar que cada endpoint respeta roles), tests de límites (payloads malformados, enormes, vacíos), DAST (probar la app en ejecución como un atacante). No son opcionales, no se saltan “porque es urgente”.

-

Monitoreo post-deploy — el deploy no es el final. Monitoreo de comportamientos anómalos en endpoints, alertas por tráfico sospechoso, revisión periódica de dependencias por CVEs, plan de respuesta a incidentes documentado y practicado.

05 ¿ES PARA TI?

Sí, si tu empresa:

- ✅ Usa vibe coding para deployar a producción sin una capa de revisión entre el output y el servidor

- ✅ No tiene pre-commit hooks de seguridad ni SAST en el pipeline

- ✅ Tiene MVPs lanzados rápido que ahora manejan datos de usuarios reales

No, si:

- ❌ Ya tienes SAST, detección de secrets y revisión humana con foco en seguridad en cada PR

- ❌ Tu código es solo para uso interno sin datos sensibles ni acceso a infraestructura crítica

Preguntas frecuentes

¿El modelo va a mejorar y dejar de generar vulnerabilidades? Parcialmente. Mejora en errores obvios. Pero el problema raíz — falta de contexto de tu arquitectura, tu modelo de amenazas y tu compliance — no se resuelve con más parámetros. Se resuelve con proceso.

¿Cuánto tarda implementar los pre-commit hooks? Un día. Configurar Semgrep + TruffleHog + pre-commit hooks toma entre 4-8 horas. Te salva meses de remediation después de un breach.

¿Trato el código de IA como código de producción o como prototipo? Trátalo como código de un junior talentoso: rápido, creativo, pero sin experiencia en producción. Necesita supervisión, guía y validación antes de llegar a usuarios reales.

Acción inmediata: Corre semgrep --config=p/security-audit src/ en tu repo hoy. Si tienes código generado por IA que llegó a producción sin auditarse, eso te muestra dónde están los agujeros. Menos de 30 minutos.

¿Quieres ayuda? → Habla con DCM — vibeamos rápido, pero desplegamos seguro.